Whether we like it or not, generative search is reshaping how earned media shows up – or doesn’t.

One in five Google searches now triggers an AI Overview, while ChatGPT, Perplexity and others are emerging as critical discovery channels, especially for younger audiences.

For PR, this is huge.

Generative engines value exactly what we bring: credible storytelling, authoritative sources, brand narrative. And for the first time, we can actually prove our impact beyond vanity metrics.

But there’s a growing problem.

As GEO gains traction, we’re seeing a flood of new software providers and metrics hitting the market. Share of Model, AI Favorability, and Citation Indexes are just a few of the metrics being introduced. Yet there’s still very little guidance on how to properly set up AI tracking programs.

If we’re not careful, GEO measurement will become another messy, opaque system with no shared standards, and we’ll end up missing this train the way we did with SEO.

So, for my first blog on the MAD Universe platform, I want to share what I’ve learned about measuring generative search in a way that’s transparent and defensible.

I don’t claim to be the ultimate authority here, and I’d obviously encourage you to do your own research and challenge my thinking. What follows is based on my own experience, testing, research, and conversations with peers.

Let’s dive in.

Before we discuss metrics and best practices, it’s important to align on the current landscape – and the core challenge facing generative search measurement today.

The critical limitation with most LLM platforms is simple: we don’t have access to real user prompts.

Without prompt-level data, measurement relies on synthetic prompts – queries constructed by analysts, or, even worse, by LLMs themselves, based on assumptions about how people might search within a category.

That’s where things start to break down.

Human language is complex. Impossibly complex. James Crawford explained this brilliantly in his Prove Me Wrong paper. A simple 12-word query, where each word has just 20 plausible alternatives, creates over four quadrillion possible prompt variations (20¹²).

In other words, the combinatorial explosion makes synthetic prompts fundamentally unreliable as a basis for measurement.
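The arithmetic behind that figure is easy to check. This is a minimal sketch that assumes every word position varies independently of the others, which is the simplification the 20¹² figure relies on.

```python
# Assumption: each of the 12 word positions can be swapped independently
# for any of 20 plausible alternatives.
words_in_query = 12
alternatives_per_word = 20

variations = alternatives_per_word ** words_in_query
print(f"{variations:,}")  # 4,096,000,000,000,000
```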

That doesn’t mean synthetic prompts are useless. They have a role, but they don’t belong in a measurement framework.

They are experiments, not benchmarks. Helpful for learning, not for tracking performance over time. Treating them as core input introduces noise into your measurement framework.

So, what can you do instead?

If you want to measure the impact of earned media on generative search today, start with Google AI Overviews (GAIO).

Google AI Overviews is the natural foundation for three reasons:

Once you anchor your measurement in GAIO, the next step is simple: test your queries for AI visibility.

If SEO isn’t already part of your measurement programme and you don’t yet have queries to test, a good rule of thumb is to include:

You don’t need hundreds of queries. In most categories, 20–50 well-chosen queries will give you more insight than a bloated list of 500. Choose ruthlessly.

Once you have your query set, measurement starts with two simple checks:

That’s your inclusion rate – the baseline everything else builds on. It tells you whether your brand is even part of the conversation before you start analysing how it’s being talked about.
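As a rough sketch, inclusion rate can be computed from a simple log of query checks. The field names, the sample queries, and the choice to divide by overview-triggering queries only are assumptions made for illustration, not a fixed definition.

```python
from dataclasses import dataclass


@dataclass
class QueryCheck:
    query: str
    has_overview: bool    # did the query trigger an AI Overview?
    brand_included: bool  # was the brand mentioned or cited in it?


def inclusion_rate(checks):
    """Share of overview-triggering queries that include the brand."""
    with_overview = [c for c in checks if c.has_overview]
    if not with_overview:
        return 0.0
    return sum(c.brand_included for c in with_overview) / len(with_overview)


# Illustrative data only.
checks = [
    QueryCheck("best crm software", True, True),
    QueryCheck("crm software pricing", True, False),
    QueryCheck("crm for startups", True, True),
    QueryCheck("crm software reviews", True, False),
    QueryCheck("crm login", False, False),
]

print(f"{inclusion_rate(checks):.0%}")  # 50%
```

Whichever denominator you pick, state it next to the number so the figure stays comparable from one reporting period to the next.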

If you’re not included:

You’re simply not there.

Before we dive into the metrics and KPIs you should be measuring against, it is important to mention that GEO measurement requires a small mindset shift to get insights you can actually trust.

AI Overviews – and large language models more broadly – don’t behave like a static database. Ask the same question on different days, or even just a few hours apart, and the answer can change slightly. Sources may rotate, brands may appear or disappear, and citations can shift order.

This is the reason why you might have heard or read about variance and confidence when it comes to GEO measurement. These two concepts simply refer to:

This kind of variability is more prominent in conversational LLMs, where responses are generated through probabilistic sampling. Google AI Overviews are generally more stable by design, as they’re grounded in live search results and ranking systems – but they’re still not fixed. Shifts in retrieval, source availability, or query interpretation can still lead to subtle changes over time.

To account for variability and attach confidence levels to your insights, run each query multiple times rather than relying on a single point-in-time analysis.

You don’t need statistical perfection or to overcomplicate things. Just 3–5 runs per query over your reporting period usually hits the sweet spot.

It might sound like a chore, but it’s essential if you want insights you can actually trust. Individually, each run can be noisy. Taken together, though, they reveal the real patterns.

And that changes how you talk about your results. Instead of saying:

“This domain drives AI visibility,”

You can say:

“This domain appeared in 70% of runs across eight priority queries this month.”

That’s a materially stronger, more defensible insight.
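A statement like that comes straight out of a run log. The sketch below assumes a hypothetical log with one entry per query run and the set of domains cited in that run; the structure and the domain names are illustrative.

```python
from collections import Counter

# Hypothetical run log: one entry per (query, run) with the cited domains.
runs = [
    {"query": "best crm software", "run": 1,
     "domains": {"example-news.com", "example-trade.com"}},
    {"query": "best crm software", "run": 2,
     "domains": {"example-news.com"}},
    {"query": "crm for startups", "run": 1,
     "domains": {"example-news.com"}},
    {"query": "crm for startups", "run": 2,
     "domains": {"example-reviews.com"}},
]


def appearance_rates(runs):
    """Fraction of all runs in which each domain was cited at least once."""
    if not runs:
        return {}
    counts = Counter()
    for run in runs:
        counts.update(set(run["domains"]))  # count each domain once per run
    return {domain: n / len(runs) for domain, n in counts.items()}


rates = appearance_rates(runs)
for domain, rate in sorted(rates.items(), key=lambda item: -item[1]):
    print(f"{domain}: {rate:.0%} of runs")
```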

With consistent presence established, you can move beyond single-query observations and start examining the patterns that shape your AI visibility – the sources that appear most often, the diversity of citations, and the effectiveness of your communications.

Now that we’ve established the rules of the game, we can move on to what to measure.

Every time your brand appears, it’s because Google’s model has decided that certain sources are credible enough to support the answer.

Citations are essentially the bridge between PR and AI visibility. It’s where PR impact becomes observable.

So, for every run where an AI Overview appears, you need to log:

These three data points let you go beyond simple vanity metrics. They allow you to measure not only whether your brand appears, but also how resilient that visibility is over time.

To measure this resilience, you need to calculate citation diversity: the number of different domains supporting your brand across runs. You can calculate this easily in Excel by wrapping UNIQUE in COUNTA, for example =COUNTA(UNIQUE(range)), or with COUNTUNIQUE in Google Sheets. The more sources that contribute, the less your visibility depends on any single site, and the stronger and more reliable your AI presence becomes.

Breaking this down by source type (news, trade, reviews, or owned content) also shows where coverage is concentrated and where gaps exist.
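If you would rather work outside a spreadsheet, the same calculation takes a few lines. This sketch assumes a hypothetical citation log of (run, domain, source type) rows; the domains and type labels are made up for illustration.

```python
from collections import defaultdict

# Hypothetical citation log rows: (run id, citing domain, source type).
citations = [
    (1, "example-news.com", "news"),
    (1, "example-trade.com", "trade"),
    (2, "example-news.com", "news"),
    (2, "example-reviews.com", "reviews"),
    (3, "brand.example", "owned"),
    (3, "example-daily.com", "news"),
]

# Citation diversity: number of distinct domains across all runs.
citation_diversity = len({domain for _, domain, _ in citations})

# The same count broken down by source type.
domains_by_type = defaultdict(set)
for _, domain, source_type in citations:
    domains_by_type[source_type].add(domain)
diversity_by_type = {t: len(d) for t, d in domains_by_type.items()}

print(citation_diversity)  # 5
print(diversity_by_type)
```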

Layering recurrence with diversity transforms raw data into actionable insight. When you look at it this way, gaps become obvious. For example:

You can also identify diminishing returns. If a domain already appears in most runs, additional coverage there may add limited incremental value. By contrast, earning visibility from a new, trusted source can materially improve citation diversity and resilience.

This is the point where GEO measurement stops being purely observational and starts informing strategy.

To recap, here’s how your GAIO measurement programme should look in practice:

Foundational Metrics:

Visibility Metrics:

Authority Metrics:

Together, these metrics show how your visibility is built, how you compare to competitors, and which sources actually power your presence.

So far, we’ve focused heavily on Google AI Overviews (GAIO) – and for good reason. As mentioned, GAIO is currently the only environment where GEO measurement can be anchored to real user search behavior. It’s where we can see what people are actually searching, not just what we assume they are searching.

But generative discovery is expanding fast across the wider LLM ecosystem. Platforms like ChatGPT, Gemini, Claude, or Perplexity are becoming critical spaces for learning and exploration. How should you think about them when building a measurement programme?

The short answer is that you can track pretty much the same metrics, but the focus shifts. LLM monitoring is best for directional listening, learning, exploring, and extending the insights you already get from GAIO. Don’t treat the results as performance KPIs.

The tricky part is building a prompt universe that supports exploration while staying grounded in real search behavior rather than pure theatre.

Here’s where GAIO, or rather Google, comes back in: the prompts that generate AI Overviews give you a real starting point. They reflect actual user behavior, which you can then adapt into a structured framework.

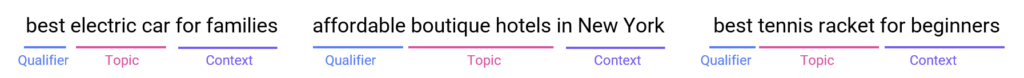

To build an effective prompt set, start with real search data. Look at the queries people are actually using in your category, break them down into their key components – topic, qualifier, context, and decision stage – and then systematically combine them.

For example:

With some basic Excel skills, much of this can be automated: export query data from Google Search Console or your SEO platform, analyse recurring patterns, and create separate columns for each component. High-frequency terms reflect real user behavior and become the building blocks of your prompt set.
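The pattern analysis can also be scripted. The sketch below counts recurring terms in a handful of queries; the query list and the stopword set are illustrative assumptions standing in for a real export.

```python
from collections import Counter

# Illustrative queries standing in for a Search Console or SEO platform export.
queries = [
    "best crm software for small business",
    "crm software pricing",
    "best crm for startups",
    "crm software reviews",
]

# Assumed stopword list; extend it for your own category.
STOPWORDS = {"for", "the", "a", "of", "in", "to"}

term_counts = Counter(
    word
    for query in queries
    for word in query.lower().split()
    if word not in STOPWORDS
)

top_terms = term_counts.most_common(3)
print(top_terms)  # [('crm', 4), ('software', 3), ('best', 2)]
```

Single-word counts are only a first pass. Multi-word phrases such as "small business" would need n-gram counting, which this sketch leaves out.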

From there, layering qualifiers, topics, context, and optional decision phrases produces a synthetic prompt universe that’s still structured around real user behavior. It may be synthetic, but because it’s grounded in observed patterns, it’s far more meaningful than pure guesswork.
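The combination step can be sketched with a Cartesian product. The component values below are hypothetical, and the empty string in `decision_phrases` is one assumed way of making that component optional.

```python
from itertools import product

# Hypothetical components derived from high-frequency terms.
qualifiers = ["best", "affordable"]
topics = ["crm software"]
contexts = ["for small business", "for startups"]
decision_phrases = ["", "pricing"]  # empty string means "no decision phrase"

prompts = [
    " ".join(part for part in combo if part)  # skip empty components
    for combo in product(qualifiers, topics, contexts, decision_phrases)
]

print(len(prompts))  # 8
print(prompts[0])    # best crm software for small business
```

The count grows multiplicatively with every component you add, so it pays to prune each list to terms that actually recur in your query data.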

Once you’ve created your prompt universe, you need to organise it effectively. You could use traditional frameworks like awareness/consideration/decision/retention stages, or more modern approaches. The one I’ve found most practical is the intent archetypes framework.

This framework groups prompts by what users are trying to achieve rather than just by topic. That approach mirrors how LLMs actually interpret queries. Traditional search works like an index: you enter a query, Google scans millions of pages, and returns the closest textual matches. Generative AI is different. It does not just match words; it interprets the underlying intent:

Is the user comparing options?

Validating a decision?

Assessing risks?

Learning the basics?

Intent archetype frameworks typically cluster prompts into five core categories, but they are not prescriptive and can be adapted to fit your industry or product category.

The traditional use cases are:

By starting with real queries, breaking them into components, and mapping them through an intent framework, you get a structured, repeatable process that can sit credibly inside a broader measurement programme – one that extends your findings and adds directional insight without overclaiming or presenting results as hard KPIs.

The framework outlined in this blog isn’t final. It’s a starting point designed to be refined, challenged, and improved by the community.

AI search in its current form is barely two years old. Platforms will evolve, models will improve, and new metrics will emerge. Already, tools like Profound and Answer the Public are starting to surface real user prompts for LLMs, hinting at a future where we can measure across multiple AI platforms, not just Google.

The guiding principles, however, remain timeless: focus on what matters, be transparent about how you measure it, and share with the community.

If we stick to these simple principles, we will move GEO measurement from buzzword to discipline – one that grows stronger and more actionable with every insight.